If you work in digital media, you know the “Stock Footage Purgatory.”

It is 11:00 PM. You need a 5-second clip to transition between scenes in your video. You need something specific: “A futuristic city with green energy plants, peaceful atmosphere, drone shot.”

You open a stock footage site. You type in the keywords. You scroll. You see the same generic clips that three of your competitors have already used. You scroll more. You find one that is okay, but it costs $79 for a single license. You buy it, feeling defeated, because it wasn’t exactly what you wanted—it was just the best of what was available.

This workflow—the act of searching for content—is becoming obsolete.

As we settle into the reality of 2026, the paradigm has shifted. We are no longer hunters scavenging for visual assets; we are architects building them.

The Sora AI Video Maker has effectively replaced the search bar with a prompt box.

I have been stress-testing this tool specifically as an “Asset Generator,” and the implications for marketing, branding, and rapid content production are staggering.

From “Stock” to “Bespoke”: The Customization Revolution

The core problem with traditional stock footage is that it is rigid. You cannot change the lighting. You cannot change the camera angle. You cannot tell the actor to smile less.

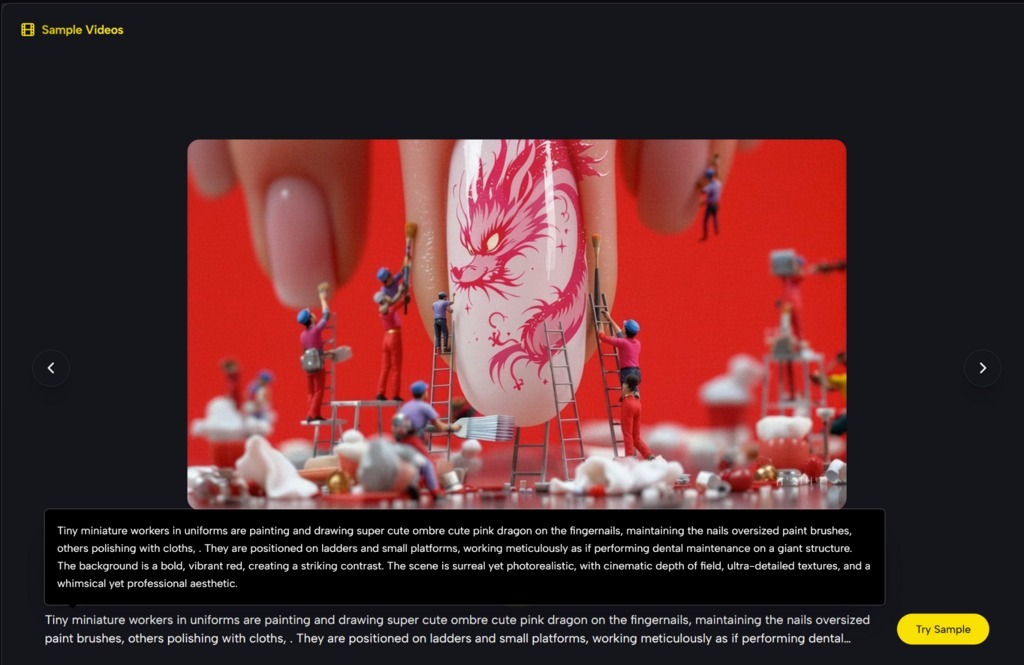

The Sora 2 model changes this by introducing Dynamic Asset Generation.

In my recent tests, I wanted to see if I could replace a standard subscription library entirely. I needed a clip of “A golden retriever running through a field of lavender at sunset.”

- Attempt 1 (The Old Way): I searched a popular stock site. I found a dog in a field of grass. I found a dog in a field of wheat. I found a lavender field without a dog. I found nothing that combined all three elements perfectly.

- Attempt 2 (The Sora 2 Way): I typed the exact prompt into the SuperMaker interface.

- Result: Within 60 seconds, I had the clip. The physics of the dog’s ears flapping in the wind were accurate. The lighting was exactly “golden hour” as requested. Most importantly, the lavender brushed against the dog’s fur, reacting to the collision.

This is the power of the Sora 2 engine: It doesn’t retrieve; it synthesizes. It understands that “lavender” is a physical object that should move when a “dog” runs through it.

The “Image-to-Video” Loop: A Marketer’s Dream

While text-to-video is impressive, the Image-to-Video feature is where the real commercial value lies. This is the feature that allows brands to maintain consistency.

Let’s say you are launching a new coffee brand. You have a high-quality product photo of your coffee bag.

The Workflow:

- Upload: You upload the static product shot to the tool.

- Prompt: You add a text instruction: “Zoom out slowly, steam rising from the top, coffee beans falling in slow motion around the bag, cinematic lighting.”

- Generate: The AI keeps your product packaging exact (no morphing of the logo) but animates the environment around it.

I tested this with a mock perfume bottle. The AI correctly identified the glass texture and generated realistic reflections of the environment moving across the surface of the bottle. This allows a single product photo to be turned into 10 different video ads—one with rain, one with sun, one with smoke—without ever booking a studio shoot.

The Economics of Attention: A Cost-Benefit Analysis

Why switch? It is not just about “cool tech.” It is about the bottom line. The cost of attention has gone up, but the budget for production usually hasn’t.

Here is how the Sora 2 workflow compares to the traditional “Stock Hunter” workflow for a standard 1-minute social media video requiring 5 background clips.

| Metric | Traditional Stock Workflow | Sora 2 |
| --- | --- | --- |
| Discovery Time | 2–3 Hours (Searching & filtering) | 10–15 Minutes (Prompting) |
| Asset Cost | $150–$500 (Licensing fees) | Free / Minimal Credit Cost |
| Exclusivity | Low (Anyone can buy the same clip) | High (Generated uniquely for you) |
| Visual Consistency | Low (Clips from different cameras/color grades) | High (You define the style in the prompt) |
| Customization | Zero (What you see is what you get) | Infinite (Change weather, angle, speed) |
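To make the comparison easier to reason about, the ranges can be normalized per clip. The sketch below uses only the figures quoted for the five-clip video; the function and variable names are illustrative, and the Sora 2 asset cost is left out because the table gives no dollar figure for it.

```python
# Per-clip arithmetic for the five-clip video, using the table's ranges.
CLIPS = 5


def per_clip(low, high, clips=CLIPS):
    """Split a (low, high) range evenly across the clips."""
    return (low / clips, high / clips)


stock_minutes = (2 * 60, 3 * 60)   # 2-3 hours of searching and filtering
prompt_minutes = (10, 15)          # 10-15 minutes of prompting
stock_cost = (150, 500)            # licensing fees in USD

stock_min_per_clip = per_clip(*stock_minutes)
prompt_min_per_clip = per_clip(*prompt_minutes)
stock_cost_per_clip = per_clip(*stock_cost)

# Smallest and largest time saving across the whole video, in minutes.
minutes_saved = (
    stock_minutes[0] - prompt_minutes[1],
    stock_minutes[1] - prompt_minutes[0],
)

print(f"Stock search: {stock_min_per_clip[0]:.0f}-{stock_min_per_clip[1]:.0f} min per clip")
print(f"Prompting: {prompt_min_per_clip[0]:.0f}-{prompt_min_per_clip[1]:.0f} min per clip")
print(f"Stock licensing: ${stock_cost_per_clip[0]:.0f}-${stock_cost_per_clip[1]:.0f} per clip")
print(f"Time saved per video: {minutes_saved[0]}-{minutes_saved[1]} min")
```

On these numbers, searching works out to roughly 24 to 36 minutes per clip against 2 to 3 minutes of prompting, and licensing to $30 to $100 per clip.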

The “Brand Safety” of Sora 2

A key observation from my testing is the stability of the new model. In 2024, using AI for a brand was risky because the video might glitch in a horrifying way. Sora 2 has reached a level of physics stability where the motion is smooth and predictable enough for commercial use. The water flows like water; the clouds drift like clouds. It feels “safe” to put a logo next to it.

Navigating the “Beta” Reality

However, we must be honest about the current state of the technology. It is a powerful tool, but it is not a clairvoyant one.

1. The “Prompt Engineering” Curve

You cannot be lazy with your words. If you type “Man eating burger,” Sora 2 might generate a burger the size of a car, or a man eating it with a fork. You need to learn the language of the director. You need to specify “Medium shot, natural lighting, realistic proportions, man taking a bite of a cheeseburger.” The quality of the output is directly tied to the quality of your input.
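One way to stay disciplined is to fill in a fixed checklist before you write the prompt. The sketch below is only an illustration of that habit: `ShotSpec` and its field names are hypothetical and are not part of Sora 2 or any tool mentioned here. It simply assembles the burger prompt from its parts.

```python
from dataclasses import dataclass


@dataclass
class ShotSpec:
    """Hypothetical checklist of the details a director would specify."""
    shot: str
    lighting: str
    style: str
    action: str

    def to_prompt(self) -> str:
        # Join the non-empty parts in a fixed order so no detail is dropped.
        parts = [self.shot, self.lighting, self.style, self.action]
        return ", ".join(p.strip() for p in parts if p.strip())


burger = ShotSpec(
    shot="Medium shot",
    lighting="natural lighting",
    style="realistic proportions",
    action="man taking a bite of a cheeseburger",
)
print(burger.to_prompt())
```

An empty field is skipped rather than rejected, so a blank entry is an easy reminder that the model will be left to guess that detail.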

2. The “Text” Blind Spot

While the physics are great, the AI still struggles to generate legible text inside the video. If you want a neon sign that says “OPEN,” it might come out as “OPEEN” or “0P3N.” For now, use the AI for the visuals, and use your editing software for the typography.

3. The “Loop” Limitation

Currently, creating a perfectly seamless loop (for a website background) is tricky. The 10–15 second clips have a definite start and end. You will need to use a cross-dissolve in your editing software to make a clip repeat smoothly.
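For readers curious about what a cross-dissolve loop does under the hood, here is a minimal sketch of the math. It assumes each frame is a flat list of pixel values and uses a simple linear fade; the function names are illustrative, and real editing software handles this for you on actual video frames.

```python
def blend(a, b, t):
    """Linear mix of two frames: t=0 gives a, t=1 gives b."""
    return [(1 - t) * x + t * y for x, y in zip(a, b)]


def make_loop(frames, overlap):
    """Return a shorter clip whose last frame flows into its first.

    The final `overlap` frames are dissolved into the first `overlap`
    frames, so playback can wrap around without a hard cut.
    """
    n = len(frames)
    if overlap < 1 or 2 * overlap > n:
        raise ValueError("overlap must be between 1 and half the clip length")
    # Head of the loop: starts as the old tail, fades into the old head.
    head = [
        blend(frames[n - overlap + i], frames[i], i / overlap)
        for i in range(overlap)
    ]
    # Body: the untouched middle, ending just before the old tail begins.
    return head + frames[overlap:n - overlap]


# Toy clip: six one-pixel frames with brightness 0 through 5.
clip = [[float(v)] for v in range(6)]
loop = make_loop(clip, overlap=2)
print(loop)
```

The trade-off is length: the looped clip is shorter than the original by the number of overlapping frames, so a longer dissolve gives a smoother wrap but less usable footage.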

Strategic Application: The “A/B Testing” Machine

The true power of this tool in 2026 is volume.

In traditional marketing, you make one video and hope it works. With Sora 2, you can generate variations.

- Variation A: The product in a sunny meadow.

- Variation B: The product in a neon city.

- Variation C: The product in a cozy living room.

You can generate all three in under 10 minutes, post them, and see which one resonates with your audience. You are no longer guessing what aesthetic your audience likes; you are testing it in real time at little or no additional production cost.
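Keeping the variations organized is mostly a matter of changing one element at a time. The sketch below builds the three prompts from a shared template; `build_variants` and the default `style` string are assumptions made for illustration, not a feature of any tool discussed here.

```python
def build_variants(product, settings, style="cinematic lighting"):
    """Return one prompt per setting, labeled A, B, C, and so on."""
    variants = {}
    for index, setting in enumerate(settings):
        label = chr(ord("A") + index)
        variants[label] = f"{product} in {setting}, {style}"
    return variants


prompts = build_variants(
    product="The product",
    settings=["a sunny meadow", "a neon city", "a cozy living room"],
)
for label, prompt in prompts.items():
    print(f"Variation {label}: {prompt}")
```

Only the setting changes between variants, so any difference in audience response can be attributed to the environment rather than to a stray change in wording.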

Conclusion: Own Your Visuals

The era of relying on other people’s footage is ending.

The AI Video Generator Agent represents a shift from consumption to creation. It allows you to stop compromising on your vision because you can’t find the right clip.

It gives you the power to be the sole owner of your visual assets. No copyright strikes, no licensing expiration dates, and no seeing your “unique” background video in a competitor’s ad.

In 2026, the most valuable asset isn’t a subscription to a stock library; it’s your ability to imagine a scene and the skill to describe it. The prompt box is open—what will you build?