You know the feeling. It hits you while you’re driving, or perhaps while you’re standing in the shower. A melody. A rhythm. A few lines of lyrics that capture exactly how you’re feeling in that moment. It loops in your head, vibrant and alive, accompanied by imaginary drums and swelling violins.

But the moment you step out of the shower or turn off the car ignition, the music dies.

Why? Because for the vast majority of us, the bridge between the music in our heads and the sound that reaches our ears is broken. Unless you have spent years mastering music theory, spent thousands of dollars on studio equipment, or have the budget to hire a session band, your ideas remain just that: ideas. They are ghosts of songs that never get to live.

For decades, this was the accepted reality. Music creation was a walled garden, guarded by technical proficiency and high costs. But recently, I’ve been exploring a shift in the digital landscape that suggests these walls are crumbling. We are entering the age of “Generative Audio,” where the barrier to entry isn’t skill—it’s imagination.

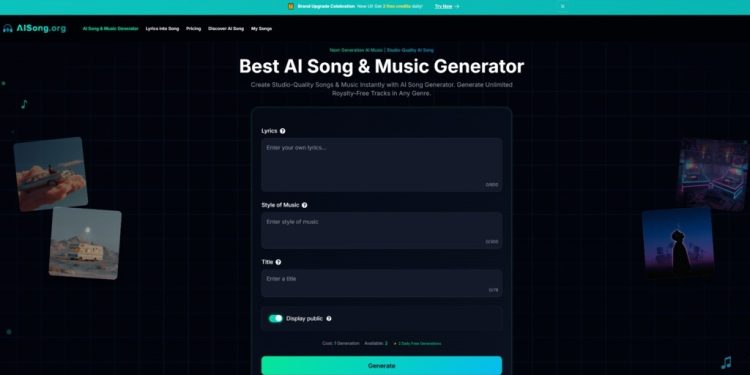

In my recent exploration of this emerging tech, I spent considerable time reviewing AI Song. My goal was to see if it could truly translate raw text into emotional audio, or if it was just another digital novelty. What I found was a tool that, while not without its quirks, offers a fascinating glimpse into the future of human expression.

The Shift: From Passive Listening to Active Direction

To understand the potential here, we have to change how we view our role. In the traditional model, you are the listener. In the AI model, you become the Director.

Think of it like the revolution in photography. Before the smartphone, capturing a high-quality image required a DSLR camera and knowledge of aperture and ISO. Today, the software handles the physics, allowing you to focus on the composition.

AI Song attempts to do the same for audio. It doesn’t ask you to play the guitar; it asks you what kind of guitar you want to hear. It doesn’t ask you to sing on pitch; it asks you what the lyrics should say.

The “Text-First” Philosophy

In my testing, the most distinct feature of this platform was its adherence to a “Text-First” architecture. Many generative tools start with a beat and try to shoehorn words into it. This engine appears to work in reverse: it analyzes the prosody (the natural rhythm and stress) of your written text and builds the musical structure around it.
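To make “prosody” a little less abstract, here is a minimal Python sketch of one ingredient: a rough syllable count per line of lyrics. This is my own toy illustration, not the platform’s method, which I have no visibility into. The function names, the vowel-group heuristic, and the sample lyrics are all assumptions made up for this example.

```python
import re


def estimate_syllables(word: str) -> int:
    """Rough syllable estimate: count vowel groups, drop a silent trailing 'e'."""
    word = re.sub(r"[^a-z]", "", word.lower())  # strip punctuation and digits
    if not word:
        return 0
    count = len(re.findall(r"[aeiouy]+", word))
    # Heuristic (assumption): a final 'e' is usually silent ("alone"),
    # except in "-le" endings ("little").
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def line_syllables(lyrics: str) -> list[int]:
    """Return the estimated syllable count for each non-empty line."""
    return [
        sum(estimate_syllables(w) for w in line.split())
        for line in lyrics.splitlines()
        if line.strip()
    ]


# Made-up sample lyrics, only for illustration.
sample = "Rain on the window\nI walk alone"
print(line_syllables(sample))  # prints [5, 4]
```

Lines with similar counts tend to sit comfortably under the same melodic phrase, which is one plausible reason evenly written verses are easier to set to music than free-form text.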

In the Lab: My Personal Experience with the Engine

I wanted to move beyond the marketing claims and test the “emotional intelligence” of the system. I decided to run a specific experiment using a set of melancholic, reflective lyrics I wrote about “urban isolation.”

The Setup

I pasted the text into the interface. Instead of choosing a generic “Pop” tag, I utilized the custom prompt feature. I requested: “Lo-fi acoustic folk, slow tempo, rainy atmosphere, male vocal, intimate production.”

The Result

When I hit generate, I expected a robotic, disjointed result. What I got was surprisingly cohesive.

- The Vibe: The system correctly interpreted “rainy atmosphere” not just as a sound effect, but as a mood. The chord progression was minor and unresolved, creating tension.

- The Vocals: This is where the technology is most interesting. The voice didn’t just read the words; it elongated the vowels at the end of the lines. It paused for breath.

However, it is important to frame this accurately. Was it indistinguishable from a Grammy-winning artist? No. But was it a convincing, emotionally resonant demo that captured the feeling I described? Absolutely. It felt less like a computer generating code and more like a session musician improvising on a theme.

Comparative Analysis: The New Creative Stack

To help you visualize where this technology fits into the current ecosystem, I’ve broken down the differences between traditional methods, stock libraries, and the generative approach.

| Feature | Traditional Studio Production | Stock Music Libraries | AI Song Generation |
| --- | --- | --- | --- |
| Creative Control | Absolute (Every note is yours) | Low (You take what exists) | High (You direct the output) |
| Barrier to Entry | Years of practice / High Cost | Monthly Subscription | Zero Skill / Low Cost |
| Time to Result | Weeks or Months | Hours of searching | Minutes |
| Lyrical Customization | 100% Custom | None (Instrumental mostly) | 100% Custom |
| Ownership Rights | Complex (Splits/Royalties) | Leased (Non-exclusive) | Full Commercial Ownership |
| Uniqueness | Unique | Generic (Used by thousands) | Unique Generation |

The “Ownership” Advantage

One specific detail that stood out during my review of the platform’s terms was the approach to copyright. In an era where creators are constantly battling DMCA strikes, the promise of Full Commercial Rights is significant. It means the track you generate is yours to use on YouTube, Spotify, or in ads, removing the legal friction that usually plagues content creation.

Beyond the Hype: A Realistic Look at Limitations

To be a truly useful guide, we must discuss the friction points. While the technology feels like magic when it works, it is not flawless.

1. The “Gacha” Mechanic

Generative AI often operates like a slot machine. In my tests, I sometimes had to generate a song three or four times to get a result where the melody truly “clicked” with the lyrics. The first attempt might be too fast, or the second might have a strange drum fill. It requires patience and iteration.
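If you like to think in code, the “generate a few takes, keep the best one” habit looks roughly like the sketch below. Everything here is hypothetical: `fake_generate` is a stand-in that returns a random tempo, not a real call to the platform, and the scoring function is a crude proxy for what is really a judgment made by ear.

```python
import random
from typing import Callable


def best_of(
    generate: Callable[[int], dict],
    score: Callable[[dict], float],
    attempts: int = 4,
) -> dict:
    """Run the generator several times and keep the highest-scoring take."""
    takes = [generate(seed) for seed in range(attempts)]
    return max(takes, key=score)


def fake_generate(seed: int) -> dict:
    """Hypothetical stand-in for a generation request; returns a fake take."""
    rng = random.Random(seed)  # seeded so each take is reproducible
    return {"seed": seed, "tempo_bpm": rng.randint(60, 140)}


def closeness_to_slow(take: dict) -> float:
    """Assumed preference: the closer to 70 BPM, the better (higher score)."""
    return -abs(take["tempo_bpm"] - 70)


best = best_of(fake_generate, closeness_to_slow)
print(best)
```

In practice the “score” is you listening to each take, but the loop is the same: several attempts, one keeper.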

2. The “Uncanny Valley” of Vocals

While the vocals are impressive, they occasionally slip into the “uncanny valley.” You might hear a slight metallic sheen on high notes, or a mispronunciation of a complex proper noun. The technology is improving rapidly, and research on neural audio synthesis suggests the gap is closing, but for now, audiophiles will still be able to tell the difference between a human breath and a simulated one.

3. Structural Rigidity

The AI excels at standard verse-chorus structures. If you try to feed it an avant-garde, non-linear poem with no clear rhythm, the engine can get confused, resulting in a melody that feels meandering.

Who Is This Actually For?

After spending a week with the tool, I believe three specific groups stand to gain the most from this technology right now.

The Content Creator & Marketer

If you are making video essays, TikToks, or product ads, finding the right background music is a nightmare. You either pay for expensive licenses or risk copyright strikes. Generating a custom track that perfectly matches the tempo of your edit is a massive workflow hack.

The Storyteller & Writer

For poets and lyricists who don’t play instruments, this is a validation engine. It allows you to finally hear your words in the context they were meant for. It transforms text on a page into an experience.

The Educator

I’ve seen innovative use cases where teachers use these tools to turn historical facts or language lessons into catchy songs. Melody is a well-known memory aid; turning a dry lesson into a song can make the material easier to remember.

The Verdict: A New Instrument for a New Era

We are living through a democratization of creativity. Just as word processors didn’t replace writers, AI Song won’t replace musicians. Instead, it creates a new lane.

It is a tool for the “Silent Songwriter”—the person with the soul of an artist but without the hands of a pianist.

It invites you to stop letting your ideas evaporate. It invites you to collaborate with the machine. My advice? Don’t look at it as a replacement for your favorite band. Look at it as a sketchpad.

Go find that poem you wrote in high school. The one you were secretly proud of. Paste it into the engine. You might be surprised by the voice that answers back.